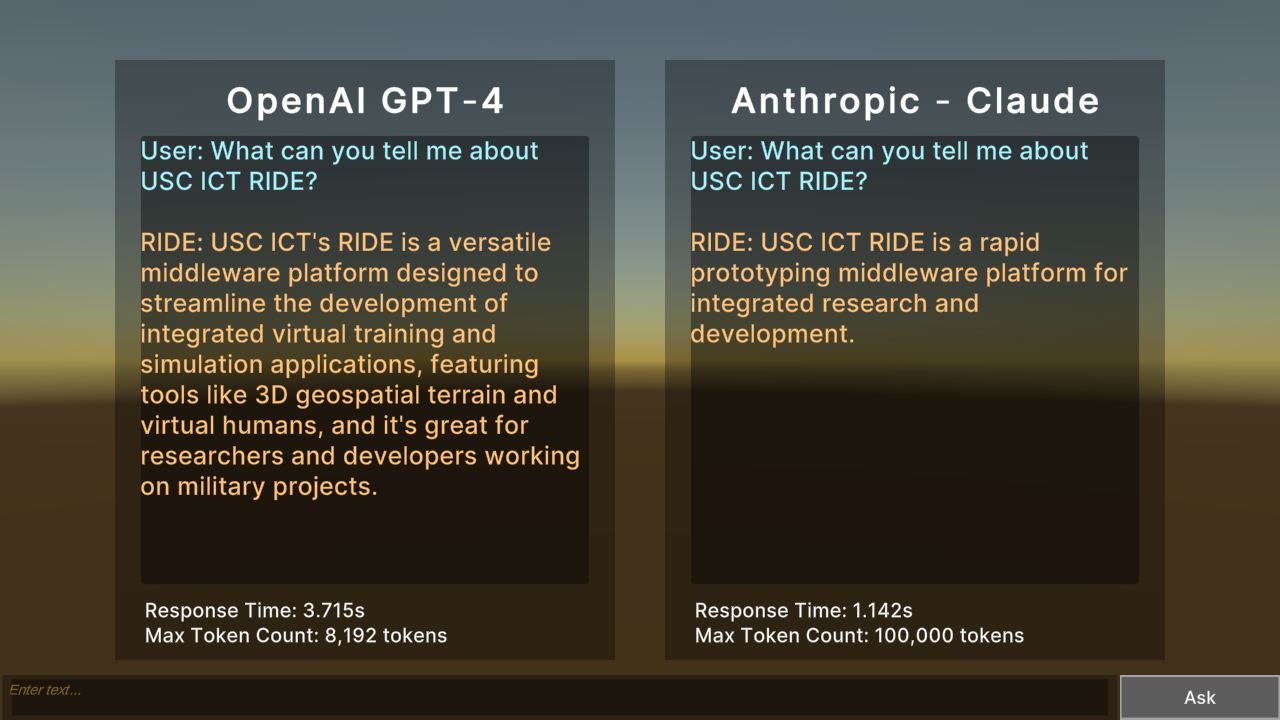

Demonstrates cloud-based large language model (LLM) generative AI, comparing OpenAI ChatGPT with Anthropic Claude.

Shows how to leverage large language model generative AI services through REST calls, using RIDE’s common web services system.

Leverages RIDE’s generic NLP C# interface. The example focuses primarily on question-answering interactions, but the same principle applies generally to any user input and agent output. Optionally includes sentiment analysis and entity analysis.

IMPORTANT: If you wish to use the ExampleLLM scene and related web services, you must update your local RIDE config file (ride.json). First, back up any local changes to the file, including your terrain key. Next, restore your Config to default using the corresponding option in the Config debug menu at run-time, then reapply any customization and your terrain key. Refer to the Config File page within Support for more information.

Assets/Ride/Examples/NLP/ExampleLLM/ExampleLLM.unity

The ExampleLLM scene uses a customizable UI and interchangeable service providers, built from a canvas, scripts and prefabs. Explore the scene’s objects in the Hierarchy view inside the Unity editor, and browse the source for Ride.NLP via the API documentation.

The main script is ExampleLLM.cs in the same folder.

Every NLP option implements the INLPQnASystem C# interface, which is itself based on INLPSystem. These systems provide the main building blocks for interfacing with an NLP service:

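    // Field that holds the NLP system instance.
    OpenAIGPT3System m_openAIGPT3;

    // Create a GameObject and attach the OpenAI system component to it.
    var openAIGPT3Component = new GameObject("OpenAIGPT3System").AddComponent<OpenAIGPT3System>();
    m_openAIGPT3 = openAIGPT3Component;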

The specific endpoint and authentication information are defined through RIDE’s configuration system:

    // Look up the config system, then copy the endpoint and key onto the component.
    m_configSystems = Globals.api.systemAccessSystem.GetSystem<RideConfigSystem>();
    openAIGPT3Component.m_uri = m_configSystems.config.openAI.endpoint;
    openAIGPT3Component.m_authorizationKey = m_configSystems.config.openAI.endpointKey;

The NLP system encapsulates the user question in an NLPQnAQuestion or NLPRequest object. This user input is sent through the RIDE common web services system, and the results are encapsulated in an NLPQnAAnswer or NLPResponse object. A provided callback function lets the client (in this case, ExampleLLM) decide what specifically to do with the result. The NLP system itself handles much of the work, so the client requires only one line to send the user question:

    m_openAIGPT3.AskQuestion(userInput, OnCompleteAnswer);
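The ask-with-callback pattern described above can be illustrated with a minimal, self-contained sketch that runs outside Unity. The types `SketchAnswer`, `ISketchQnASystem` and `EchoQnASystem` are hypothetical stand-ins invented for this illustration; they are not RIDE's actual interfaces, and the real signatures of `AskQuestion` and `OnCompleteAnswer` may differ. The fake provider echoes the question instead of making a REST call.

```csharp
using System;

// Hypothetical stand-in for an answer object; NOT RIDE's NLPQnAAnswer.
public sealed class SketchAnswer
{
    public string Text { get; }
    public SketchAnswer(string text) { Text = text; }
}

// Hypothetical stand-in for a question-answering interface; NOT RIDE's INLPQnASystem.
public interface ISketchQnASystem
{
    // Sends the question and invokes onComplete once an answer is available.
    void AskQuestion(string question, Action<SketchAnswer> onComplete);
}

// Fake provider that echoes the question instead of calling a web service.
public sealed class EchoQnASystem : ISketchQnASystem
{
    public void AskQuestion(string question, Action<SketchAnswer> onComplete)
    {
        if (onComplete == null) throw new ArgumentNullException(nameof(onComplete));
        onComplete(new SketchAnswer("Echo: " + question));
    }
}

public static class Program
{
    // Client-side callback: the client decides what to do with the result.
    static void OnCompleteAnswer(SketchAnswer answer)
    {
        Console.WriteLine(answer.Text);
    }

    public static void Main()
    {
        // The client depends only on the interface, so providers are interchangeable.
        ISketchQnASystem system = new EchoQnASystem();
        system.AskQuestion("What is RIDE?", OnCompleteAnswer); // prints "Echo: What is RIDE?"
    }
}
```

Because the client code depends only on the interface and the callback, swapping one provider for another would, under these assumptions, change just the line that constructs the system.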